Google’s AI research lab, DeepMind, announced Genie 3 last August, showing off an AI system that can generate interactive virtual environments in real time. Now, Google has released an experimental prototype that Google AI subscribers can try starting today. Granted, we still can’t generate VR worlds on the fly, but we’re getting tantalizingly close.

News

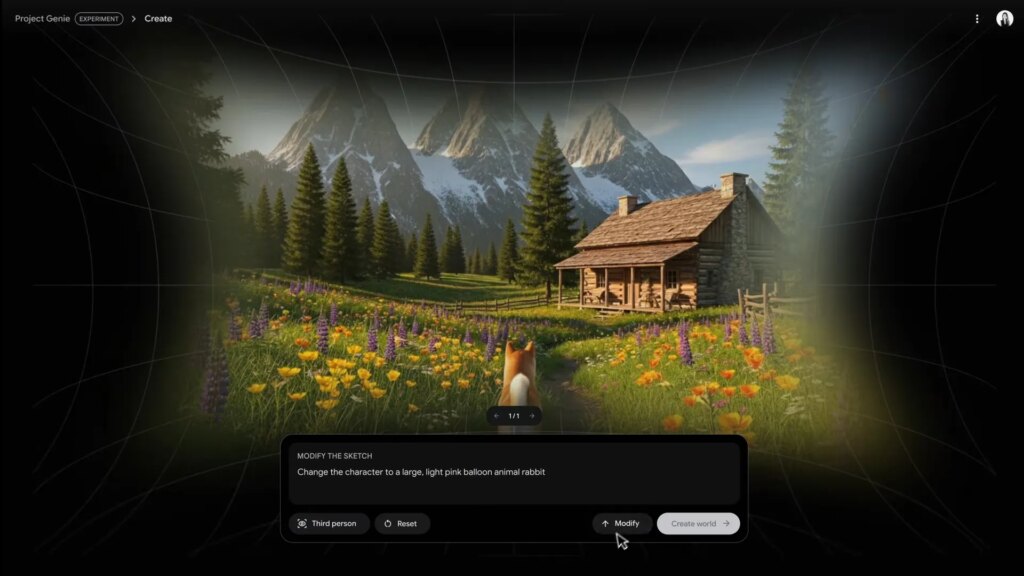

Project Genie is what Google calls an “experimental research prototype,” so it’s not yet some dreamy “AI gaming console.” Essentially, it allows users to create, explore, and modify interactive virtual environments through a web interface.

The system works much like existing image and video generators, which take text prompts and uploaded reference images, but Project Genie goes a few steps further.

Project Genie has not one, but two main prompt boxes: one for the environment and one for the character. A third prompt box lets you change the initial appearance of the environment before fully generating it (such as making the sword bigger or giving the trees fall colors).

Google said in a blog post that Project Genie has limitations as an early research system. Generated environments may not closely match real-world physics or prompts, character control may be inconsistent, sessions are limited to 60 seconds, and some previously announced features are not yet included.

For now, the only thing you can export is a video of your session, though you can also explore and remix other “worlds” available in the gallery.

Project Genie is currently being rolled out to Google AI Ultra subscribers ages 18 and older in the United States, and will be made more widely available at some point in the future.

My View

There are a lot of hurdles to overcome before we see something like Project Genie running on VR headsets.

One of the biggest hurdles is undoubtedly cloud streaming. Cloud gaming does exist on VR headsets, but frankly it’s not great right now, because latency varies widely depending on how close you are to the service’s data center. On top of that, today’s cloud gaming giants (e.g. NVIDIA GeForce Now, Xbox Cloud Gaming) are generally aimed at flatscreen gaming. Their bar for rendering and input latency is much lower than that of VR headsets, which typically need motion-to-photon latency of around 20ms or less to avoid user discomfort.
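To make the latency problem concrete, here is a rough back-of-the-envelope sketch in Python. Every number in it is an illustrative assumption of mine, not a measurement of Project Genie, any cloud service, or any headset; the stage names are hypothetical too. The only figure taken from the discussion above is the roughly 20ms comfort target.

```python
# Toy motion-to-photon budget for a cloud-streamed, AI-generated frame.
# All stage timings are ASSUMED for illustration only.

MTP_TARGET_MS = 20  # comfort target discussed in the text


def motion_to_photon_ms(network_rtt_ms, generate_ms, codec_ms, display_ms):
    """Add up the stages between a head movement and the updated image."""
    return network_rtt_ms + generate_ms + codec_ms + display_ms


def within_budget(total_ms, target_ms=MTP_TARGET_MS):
    """True if the total latency fits inside the comfort target."""
    return total_ms <= target_ms


# Hypothetical user close to a data center vs. far from one.
near = motion_to_photon_ms(network_rtt_ms=10, generate_ms=42, codec_ms=8, display_ms=5)
far = motion_to_photon_ms(network_rtt_ms=60, generate_ms=42, codec_ms=8, display_ms=5)

print(f"near data center: {near} ms, fits budget: {within_budget(near)}")
print(f"far from data center: {far} ms, fits budget: {within_budget(far)}")
```

Under these made-up numbers, even the user sitting next to the data center lands well above the target, and distance only widens the gap. The point is not the specific values but that network round trip, generation time, video encoding, and display all draw from the same small budget.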

This also doesn’t take into account that Project Genie would somehow have to render the world with stereopsis in mind. The system would need to generate two slightly different viewpoints, one per eye, that resolve into a single stereoscopic 3D image, which can pose its own problems.

As far as I understand, the world model behind Project Genie is probabilistic. That is, objects may behave slightly differently each time. This is one reason why Genie 3 can only support up to a few minutes of continuous interaction at a time. Genie 3’s world generation also has a tendency to drift away from the prompt, which can produce undesirable results.
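A toy Python sketch can illustrate why a probabilistic, step-by-step generator tends to wander the longer it runs. To be clear, this is not how Genie 3 works internally; it is a simple random walk I am using as an analogy, and the function names and noise level are my own assumptions.

```python
import random


def rollout(seed, steps, noise=0.05):
    """Toy autoregressive rollout: each new state is the previous state
    plus a small random perturbation (ASSUMED Gaussian noise)."""
    rng = random.Random(seed)
    state = 0.0
    history = []
    for _ in range(steps):
        state += rng.gauss(0.0, noise)
        history.append(state)
    return history


def mean_abs_drift(step, runs=200, steps=1000):
    """Average distance from the starting state at a given step,
    taken over many independently seeded rollouts."""
    total = sum(abs(rollout(seed, steps)[step - 1]) for seed in range(runs))
    return total / runs


# Same "prompt" (starting state), different seeds: different outcomes.
a = rollout(seed=1, steps=1000)
b = rollout(seed=2, steps=1000)

early = mean_abs_drift(step=10)
late = mean_abs_drift(step=1000)
print(f"average drift after 10 steps: {early:.3f}")
print(f"average drift after 1000 steps: {late:.3f}")
```

Two runs from the same starting point diverge, and the average distance from that starting point grows with the number of steps. In this analogy, a short session cap is one straightforward way to keep accumulated drift in check.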

So while it’s unlikely we’ll see a VR version of this in the very near future, I’m excited to see the small steps that lead to wherever it ends up. The idea of casually exploring a world on the fly, holodeck-style, whether it’s set in the past, the present, or a fiction of your choice, sounds very interesting from a learning perspective. One of the VR apps I’ve used the most so far is Google Earth VR. I can only imagine a more detailed and vibrant version, useful for learning foreign languages, time traveling, and virtual tourism.

But before we get there, there’s a real chance that the Internet will be overrun by “gaming slop,” something like asset flips taken to the extreme. Game developers could also face the same struggles other digital artists currently do when AI is used to sample and recreate copyrighted works, albeit on a whole new level (GTA VI anyone?).

And I can’t shake the feeling that the future is shaping up to be a very strange, but hopefully very interesting and not completely scary place. I can imagine a future where photorealistic AI-driven environments work with brain-computer interfaces (BCI), two subjects that Valve has been researching for years, to provide the virtual reality I’m actually waiting for.